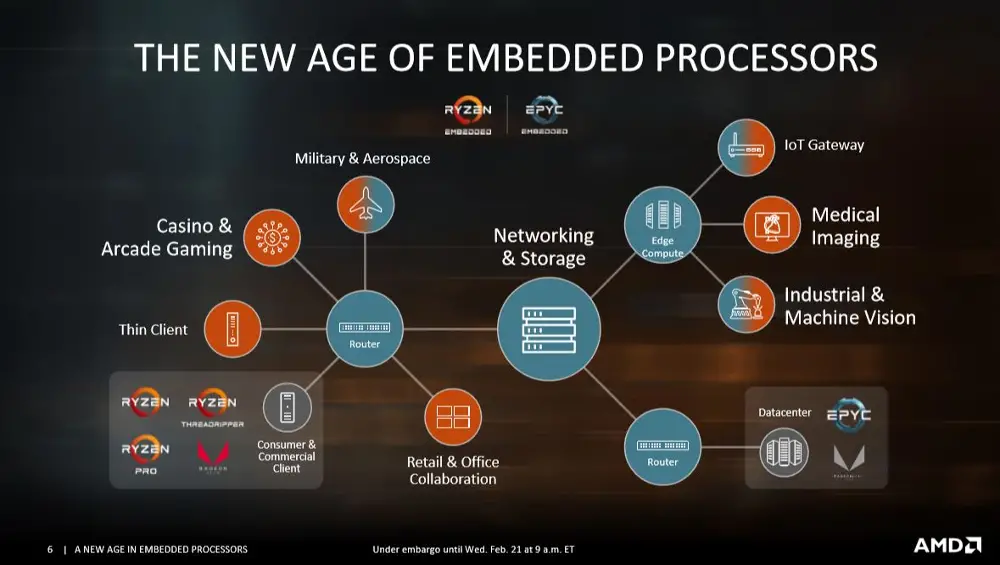

AMD launches EPYC Embedded 3000 and Ryzen Embedded V1000 SoCs

Exactly two weeks after Intel launched its Xeon D-2100 series of low-power edge/embedded microprocessors, AMD is launching its competing lineup.

Two new embedded processor families are being introduced: the AMD EPYC Embedded 3000 and the AMD Ryzen Embedded V1000.

EPYC Embedded 3000

The EPYC Embedded 3000 series (formerly codenamed Snowy Owl) is aimed at networking, storage, and edge computing devices. These parts are based on AMD's standard Zeppelin die and range from four to sixteen cores. They effectively succeed AMD's previous Opteron A1100 series of SoCs, which were based on ARM. The new parts have up to 64 PCIe lanes and, being geared toward networking and related markets, support up to eight 10 GbE ports. Eight new SKUs were launched, ranging from 30 to 100 W TDP and grouped in pairs that come with and without simultaneous multithreading (SMT) support.

| Model | C/T | TDP | Base | Turbo (1C) | Memory | PCIe |
|---|---|---|---|---|---|---|
| **Dual-die configuration** | | | | | | |
| 3451 | 16/32 | 100 W | 2.15 GHz | 3.0 GHz | 4 × DDR4-2666 | x64 |
| 3401 | 16/16 | 85 W | 1.85 GHz | 3.0 GHz | 4 × DDR4-2666 | x64 |
| 3351 | 12/24 | 80 W | 1.90 GHz | 3.0 GHz | 4 × DDR4-2666 | x64 |
| 3301 | 12/12 | 65 W | 2.00 GHz | 3.0 GHz | 4 × DDR4-2666 | x64 |
| **Single-die configuration** | | | | | | |
| 3251 | 8/16 | 50 W | 2.50 GHz | 3.1 GHz | 2 × DDR4-2666 | x32 |
| 3201 | 8/8 | 30 W | 1.50 GHz | 3.1 GHz | 2 × DDR4-2133 | x32 |
| 3151 | 4/8 | 45 W | 2.70 GHz | 2.9 GHz | 2 × DDR4-2666 | x32 |
| 3101 | 4/4 | 35 W | 2.10 GHz | 2.9 GHz | 2 × DDR4-2666 | x32 |

You may have noticed that we grouped the SKUs into dual-die and single-die configurations. This is because, unlike their bigger counterparts (i.e., EPYC and Threadripper), these parts come either as a single die with up to 8 cores or as two dies with more than 8 cores. The single-die configuration uses a single-chip module SP4r2 package, whereas the dual-die configuration uses a multi-chip module SP4 package. Both packages are ball grid arrays (BGAs) and are pin-compatible with each other. We don't have a picture of the packages, but they are considerably smaller than the SP3 package used for the regular EPYC chips.

As with all of AMD's recent enterprise parts, the 3000-series models support the Secure Memory Encryption (SME) extension, which provides physical memory encryption, as well as its subset extension, Secure Encrypted Virtualization (SEV). Because of the type of applications these parts go into, product availability is set for up to 10 years, offering lengthy lifecycle support.

The die configuration plays a big role in defining the features available for each SKU. Each die supports up to 512 GiB of dual-channel DDR4-2666 memory, meaning the dual-die configurations support twice that amount (i.e., 1 TiB of quad-channel DDR4-2666). Note that those rates are for 1 DIMM per channel; other configurations have lower rates. Likewise, each die provides up to 32 PCIe lanes, with 64 PCIe lanes offered on the dual-die SKUs.
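Since every resource described above is per die, a SKU's package-level limits follow from its die count alone. The Python sketch below derives them using only the per-die figures stated here (512 GiB, two DDR4 channels, and 32 PCIe lanes per die at 1 DIMM per channel) and the rule that parts with up to 8 cores are single-die. The names `PER_DIE`, `die_count`, and `package_limits` are hypothetical illustrations, not an AMD tool or API.

```python
# Per-die limits for EPYC Embedded 3000, as stated in the article
# (1 DIMM per channel). Hypothetical names, for illustration only.
PER_DIE = {"memory_gib": 512, "ddr4_channels": 2, "pcie_lanes": 32}

# Core counts per SKU, taken from the table.
SKU_CORES = {"3451": 16, "3401": 16, "3351": 12, "3301": 12,
             "3251": 8, "3201": 8, "3151": 4, "3101": 4}


def die_count(cores: int) -> int:
    """Up to 8 cores fits on one Zeppelin die; more cores need two."""
    return 1 if cores <= 8 else 2


def package_limits(cores: int) -> dict:
    """Scale each per-die limit by the number of dies in the package."""
    dies = die_count(cores)
    return {key: value * dies for key, value in PER_DIE.items()}


for sku, cores in SKU_CORES.items():
    print(sku, package_limits(cores))
```

For example, the 12-core 3301 is dual-die, so it gets 1,024 GiB (1 TiB) across four channels and 64 PCIe lanes, while the 8-core 3251 stays at 512 GiB, two channels, and 32 lanes. The sketch deliberately ignores the per-SKU memory speed (e.g., the 3201's DDR4-2133 limit).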

One area of concern we pointed out when Intel introduced its Xeon D-2100 series was the lack of low-power SKUs. The Broadwell-based Xeon Ds were almost exclusively in the 20-60 W range, while the new Skylake-based Xeon Ds are entirely above 65 W. AMD's new embedded processors seem to be taking advantage of this void in Intel's new lineup with a number of parts between 35 and 65 W. It's important to note that Intel has not discontinued the older D-1500 series, which still complements the new parts, but AMD's new models offer highly competitive alternatives. While the comparison is largely asymmetrical, we do think AMD has introduced some very attractive sweet-spot SKUs, such as the EPYC Embedded 3301, a $450 12-core/12-thread SKU with a 65 W TDP and all the dual-die features, such as quad-channel memory support and 64 PCIe lanes.

Configurable I/O

With the Xeon D-2100, Intel introduced 20 additional high-speed I/O (HSIO) lanes that can be configured as PCIe, SATA, or USB (or any valid combination of those).

Since each Zeppelin die has a highly configurable set of I/Os, AMD has exposed some of this configurability to system designers for greater design flexibility, much like what Intel has done with its Xeon D models.

The single-die models have up to 32 PCIe lanes that are MUX'ed with the SATA and 10GbE ports. Depending on the application, these models can be configured either as 32 PCIe lanes or as a mix of PCIe lanes with up to 8 SATA ports and up to 4 x 10GbE ports. Likewise, the dual-die models offer 64 PCIe lanes that can be configured with up to 16 SATA ports and up to 8 x 10GbE ports.
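As a rough illustration of how a board designer might sanity-check an I/O mix against these limits, here is a minimal Python sketch. It rests on a simplifying assumption that the article does not state: that each SATA or 10GbE port consumes exactly one of the muxed lanes. Real lane costs and which lanes can carry which protocol are platform details. `IoConfig` and `validate` are hypothetical names.

```python
from dataclasses import dataclass

# Per-die limits from the article: 32 muxed lanes,
# up to 8 SATA ports and up to 4 x 10GbE ports per die.
LANES_PER_DIE = 32
SATA_PER_DIE = 8
TEN_GBE_PER_DIE = 4


@dataclass
class IoConfig:
    pcie_lanes: int
    sata_ports: int
    ten_gbe_ports: int


def validate(cfg: IoConfig, dies: int) -> list[str]:
    """Return a list of problems; an empty list means the mix fits.

    ASSUMPTION (not from AMD): each SATA or 10GbE port uses exactly one
    muxed lane. Limits are checked on package totals only, not on how
    ports are placed across the individual dies.
    """
    problems = []
    if cfg.sata_ports > SATA_PER_DIE * dies:
        problems.append("too many SATA ports")
    if cfg.ten_gbe_ports > TEN_GBE_PER_DIE * dies:
        problems.append("too many 10GbE ports")
    used = cfg.pcie_lanes + cfg.sata_ports + cfg.ten_gbe_ports
    available = LANES_PER_DIE * dies
    if used > available:
        problems.append(f"{used} lanes requested, {available} available")
    return problems


# A single-die storage box: 16 PCIe lanes, 8 SATA, 4 x 10GbE.
print(validate(IoConfig(pcie_lanes=16, sata_ports=8, ten_gbe_ports=4), dies=1))
```

Under this assumption, 16 PCIe lanes plus 8 SATA and 4 x 10GbE ports use 28 of a single die's 32 lanes and pass, while asking for all 32 PCIe lanes plus 8 SATA ports would be rejected.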

Ryzen Embedded V1000

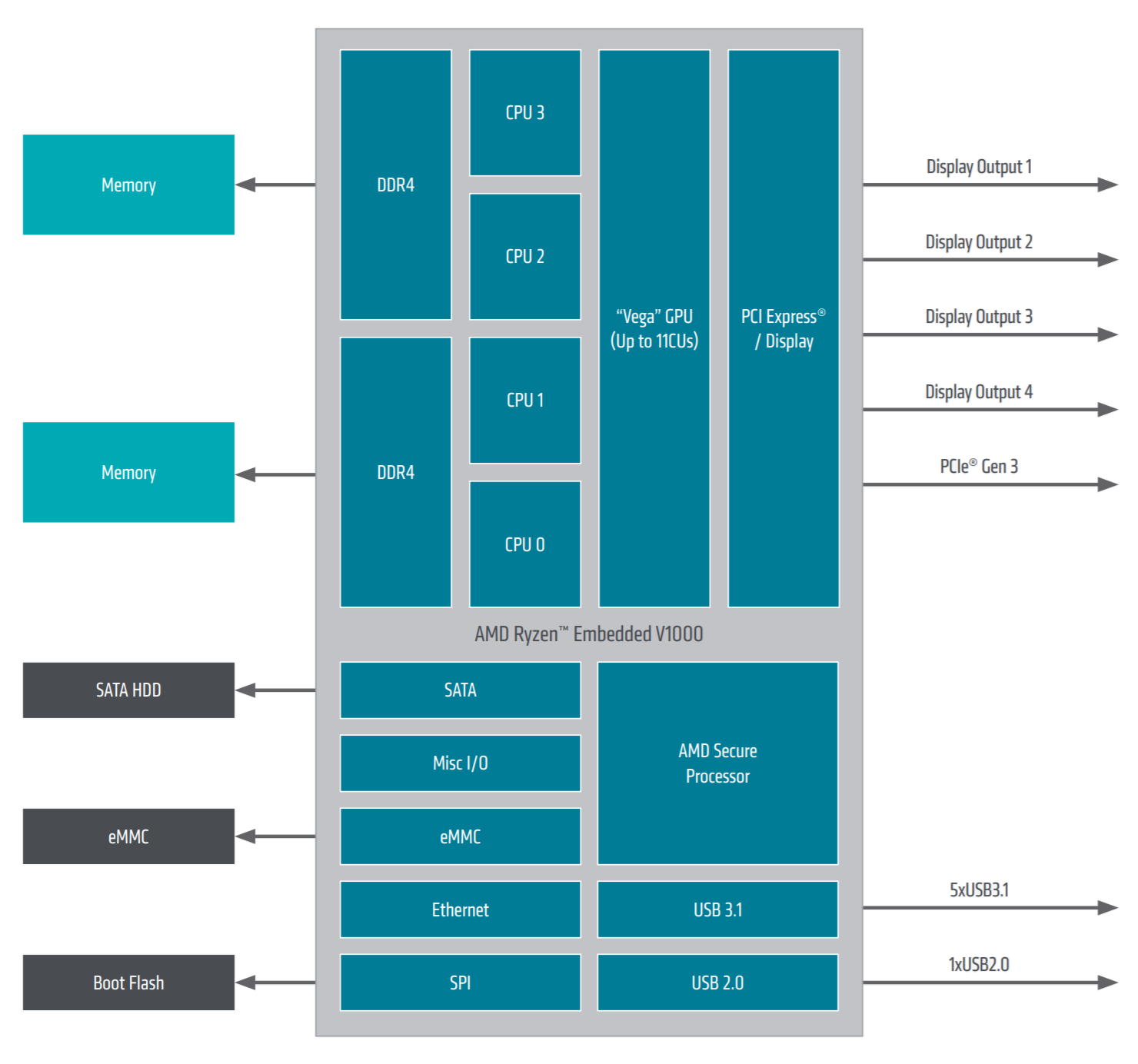

The second family is the Ryzen Embedded V1000 series (codename Great Horned Owl), aimed at medical imaging, industrial systems, digital gaming, and thin clients. These processors are based on AMD's APU die, which incorporates up to four Zen cores along with a Vega graphics processor. The V1000 series effectively succeeds AMD's prior R-series parts (Merlin Falcon, based on Excavator). Four Embedded V1000 parts were launched:

| Model | C/T | TDP | Base | Turbo (1C) | Memory |
|---|---|---|---|---|---|
| V1807B | 4/8 | 45 W | 3.35 GHz | 3.8 GHz | 2 × DDR4-3200 |
| V1756B | 4/8 | 45 W | 3.25 GHz | 3.6 GHz | 2 × DDR4-3200 |
| V1605B | 4/8 | 15 W | 2.00 GHz | 3.6 GHz | 2 × DDR4-2400 |
| V1202B | 2/4 | 15 W | 2.30 GHz | 3.2 GHz | 2 × DDR4-2400 |

The two 45 W TDP SKUs also have a configurable TDP-down (cTDP-down) of 35 W and a configurable TDP-up (cTDP-up) of 54 W. The low-power 15 W SKUs have a cTDP-down of 12 W and a cTDP-up of 25 W.
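The cTDP envelopes can be captured as a simple lookup from each SKU's nominal TDP. The Python sketch below does that and checks whether a requested power setting falls within the envelope. It assumes, for illustration, that any value between cTDP-down and cTDP-up is permitted; the article gives only the endpoints, and actual firmware may expose only specific steps. `ctdp_range` and `accepts` are hypothetical helpers.

```python
# Nominal TDP -> (cTDP-down, cTDP-up) in watts, as stated in the article.
CTDP_RANGES = {45: (35, 54), 15: (12, 25)}

# Nominal TDP of each Ryzen Embedded V1000 SKU, from the table.
V1000_TDP = {"V1807B": 45, "V1756B": 45, "V1605B": 15, "V1202B": 15}


def ctdp_range(sku: str) -> tuple[int, int]:
    """Look up the configurable TDP envelope for a SKU."""
    return CTDP_RANGES[V1000_TDP[sku]]


def accepts(sku: str, watts: float) -> bool:
    """ASSUMPTION: any value between cTDP-down and cTDP-up is allowed."""
    low, high = ctdp_range(sku)
    return low <= watts <= high


print(ctdp_range("V1605B"), accepts("V1807B", 54), accepts("V1202B", 30))
```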

All models support dual-channel DDR4 memory, with the higher-end models supporting data rates of up to 3,200 MT/s at 1 DIMM per channel (lower rates for other configurations). It's worth noting that AMD has been raising the specifications of its memory controller from release to release at a very nice pace. For example, the original Raven Ridge parts, such as the Ryzen 7 2700U released back in October of last year, only supported up to 2400 MT/s. The Ryzen 3 2200G, released just a month ago, supports up to 2933 MT/s. The newly introduced Ryzen Embedded V1807B and V1756B now support up to 3200 MT/s, which is also the highest speed bin specified by the current JEDEC standard. Because the Infinity Fabric interconnect in the Zen microarchitecture is clocked in step with the memory, higher memory data rates are very advantageous for the performance of the chip.
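To put numbers on that progression: DDR4 performs two transfers per clock cycle, so the memory clock is half the quoted data rate. On first-generation Zen parts such as Raven Ridge, the Infinity Fabric clock is locked 1:1 to that memory clock. The sketch below simply applies those two relationships to the data rates mentioned above. The 1:1 fabric ratio is the assumption built into `fabric_clock_mhz`, which is a hypothetical helper.

```python
# Maximum data rates (MT/s) mentioned in the article.
RATES = {
    "Ryzen 7 2700U": 2400,
    "Ryzen 3 2200G": 2933,
    "Ryzen Embedded V1807B / V1756B": 3200,
}


def memory_clock_mhz(data_rate_mts: float) -> float:
    """DDR transfers data twice per clock, so MEMCLK is half the rate."""
    return data_rate_mts / 2


def fabric_clock_mhz(data_rate_mts: float) -> float:
    """ASSUMPTION: fabric clock locked 1:1 to MEMCLK, as on Zen/Zen+."""
    return memory_clock_mhz(data_rate_mts)


for part, rate in RATES.items():
    print(f"{part}: DDR4-{rate} -> fabric ~{fabric_clock_mhz(rate):.1f} MHz")
```

Going from DDR4-2400 to DDR4-3200 therefore raises the fabric clock from 1,200 MHz to 1,600 MHz, roughly a third faster.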

Targeting embedded devices, these parts are equipped with 16 PCIe lanes, two 10 Gigabit Ethernet ports, and four USB 3.1 Gen 2 ports (two of which can be configured as Type-C with DisplayPort Alternate Mode and Power Delivery). As with the EPYC Embedded series, the Ryzen Embedded models can reconfigure some of the PCIe lanes as SATA ports (up to 2 ports).

In the area of security, the new Ryzen Embedded parts also support Secure Memory Encryption (SME) as well as its subset extension, Secure Encrypted Virtualization (SEV).

Graphics

All models feature integrated Vega graphics with a varying number of Compute Units (CUs), which ultimately determines the theoretical peak performance of the GPU. Note that the performance is listed in 16-bit half-precision FLOPS.

| Model | CUs | SPs | GPU Clock | Peak Performance (FP16) |
|---|---|---|---|---|
| V1807B | 11 | 704 | 1.3 GHz | 3.661 teraFLOPS |
| V1756B | 8 | 512 | 1.3 GHz | 2.662 teraFLOPS |
| V1605B | 8 | 512 | 1.1 GHz | 2.253 teraFLOPS |
| V1202B | 3 | 192 | 1.0 GHz | 768 gigaFLOPS |
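The figures in this table can be reproduced from the CU count and clock. Each Vega CU has 64 stream processors (SPs); each SP can issue one fused multiply-add (counted as two floating-point operations) per cycle; and Vega's packed FP16 math processes two half-precision values per 32-bit lane. The Python sketch below applies that formula. The constant and function names are illustrative only.

```python
# GPU configurations from the table: (compute units, GPU clock in GHz).
GPUS = {
    "V1807B": (11, 1.3),
    "V1756B": (8, 1.3),
    "V1605B": (8, 1.1),
    "V1202B": (3, 1.0),
}

SPS_PER_CU = 64    # stream processors per Vega compute unit
OPS_PER_FMA = 2    # a fused multiply-add counts as two operations
FP16_PACKING = 2   # two FP16 values packed into each 32-bit lane


def fp16_gflops(cus: int, clock_ghz: float) -> float:
    """Theoretical peak FP16 throughput in GFLOPS."""
    sps = cus * SPS_PER_CU
    return sps * OPS_PER_FMA * FP16_PACKING * clock_ghz


for model, (cus, clock) in GPUS.items():
    print(f"{model}: {cus * SPS_PER_CU} SPs, "
          f"{fp16_gflops(cus, clock):,.1f} GFLOPS FP16")
```

For the V1807B, this gives 704 × 2 × 2 × 1.3 GHz = 3,660.8 GFLOPS, which rounds to the 3.661 teraFLOPS in the table. Halving the result gives the corresponding single-precision (FP32) peak.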

All models support up to four displays at 4K resolution via DisplayPort 1.4, embedded DisplayPort (eDP) 1.4, and/or HDMI 2.0b. As with the consumer Raven Ridge parts, the Vega GPU has hardware acceleration for H.265 decode and 8-bit encode, as well as VP9 decode, at 4K resolution and 60 FPS.
