Huawei Expands Kunpeng Server CPUs, Plans SMT, SVE For Next Gen

Last week Huawei expanded their Kunpeng server CPU family with more models and new servers.

Kunpeng

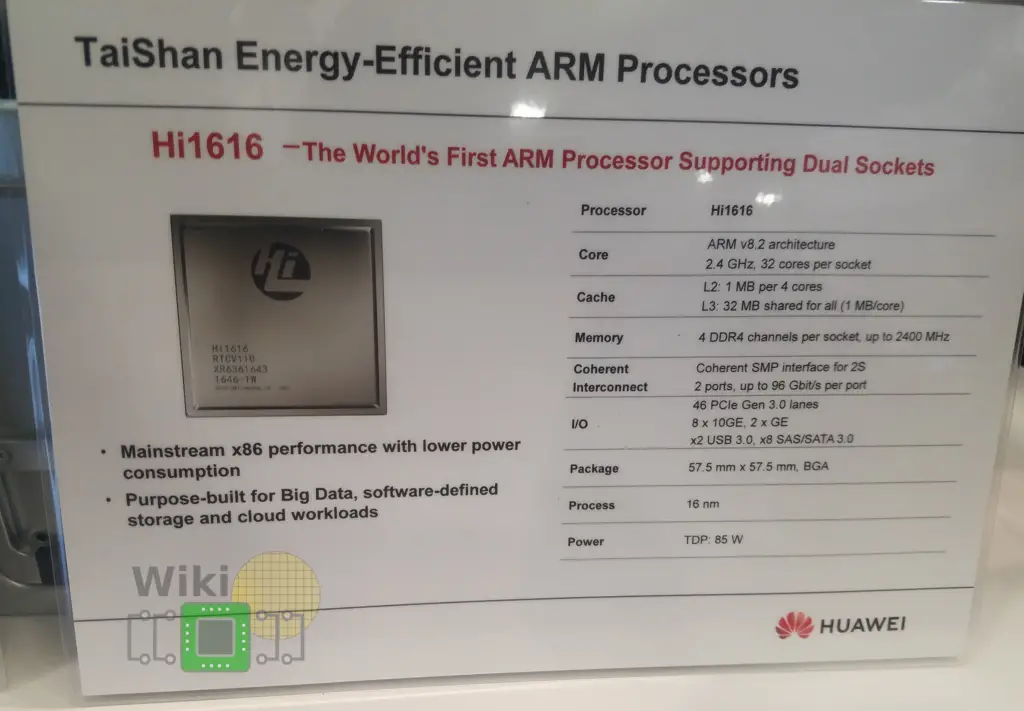

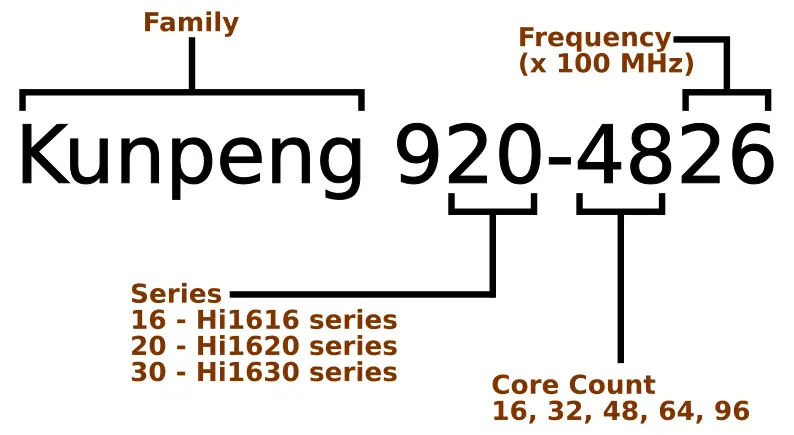

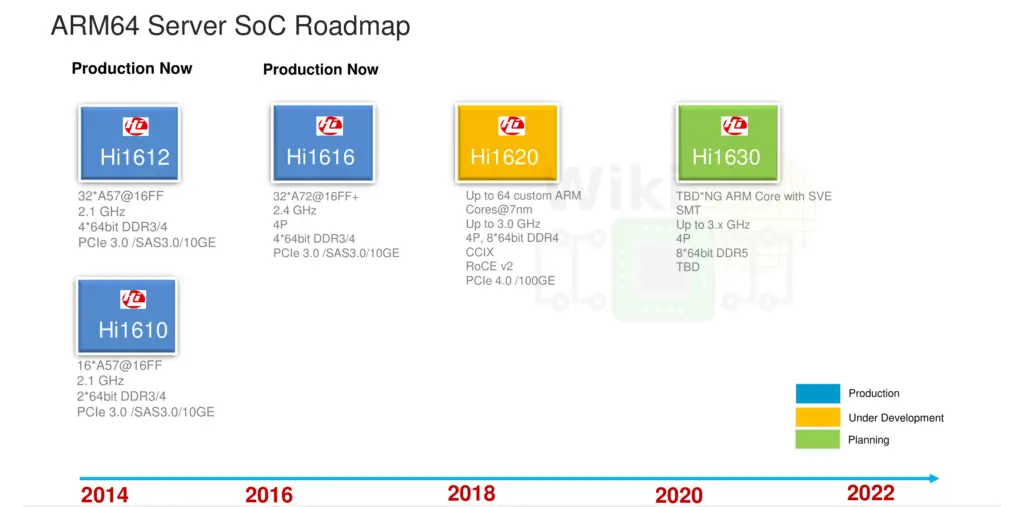

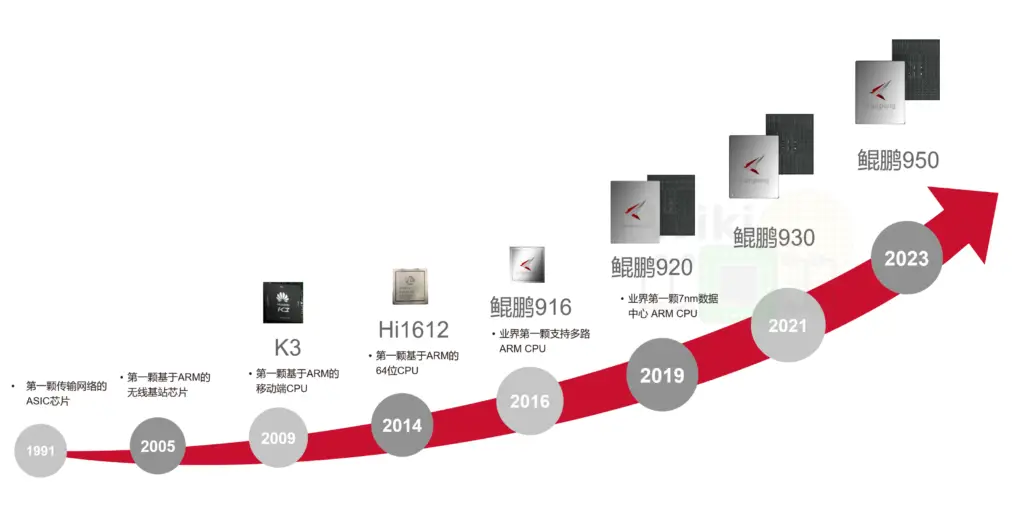

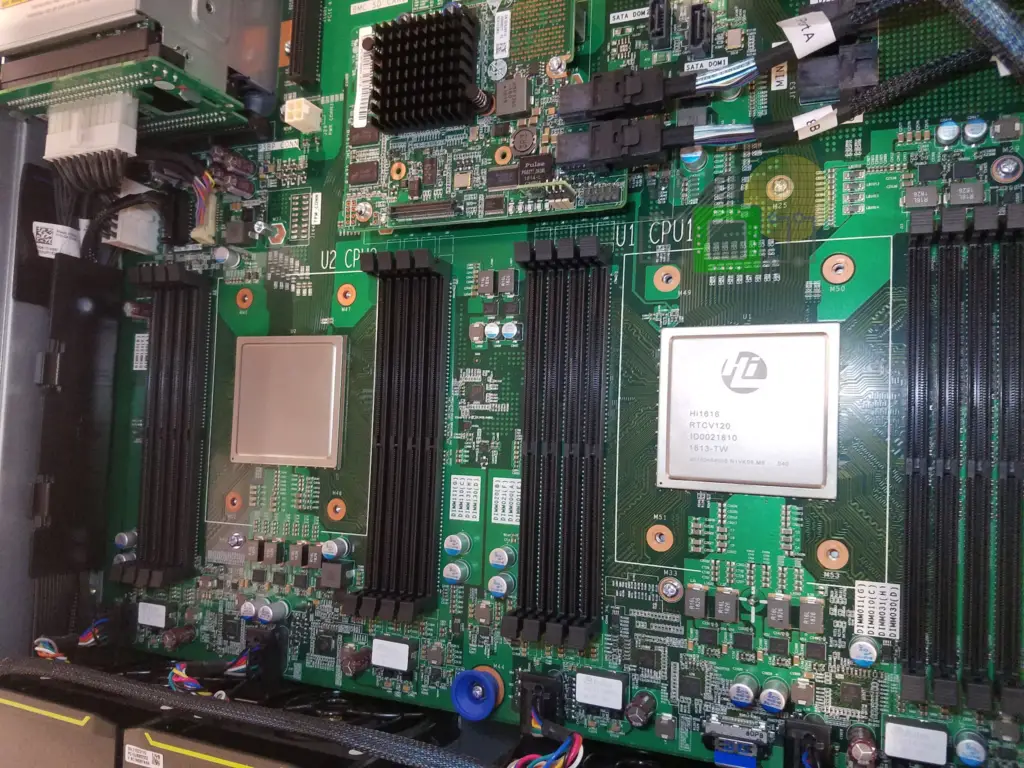

For readers who don’t follow Huawei closely, the Kunpeng family was previously known as the HiSilicon Hi16xx series. The 32-core Hi1616, which was based on the Arm Cortex-A72, is now known as the Kunpeng 916. The company’s latest chip, the HiSilicon Hi1620, is now known as the Kunpeng 920. Late next year, Huawei will announce its next-generation chip, the Hi1630, which will go under the name Kunpeng 930.

Kunpeng 920 and the TaiShan v110 Core

HiSilicon’s latest chip is the Kunpeng 920. The 920 series is based on the company’s own custom TaiShan V110 core. In case you are wondering, the V100 was the semi-custom Cortex-A72 core in the Hi1616.

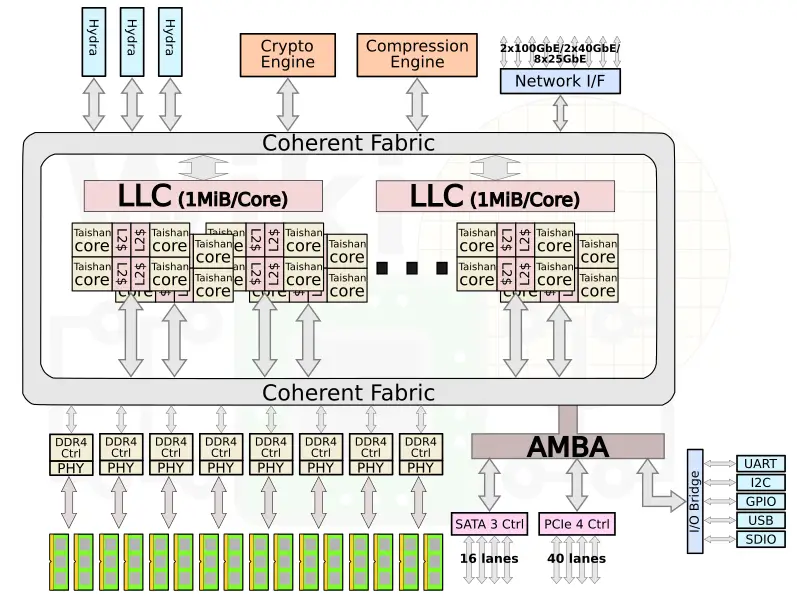

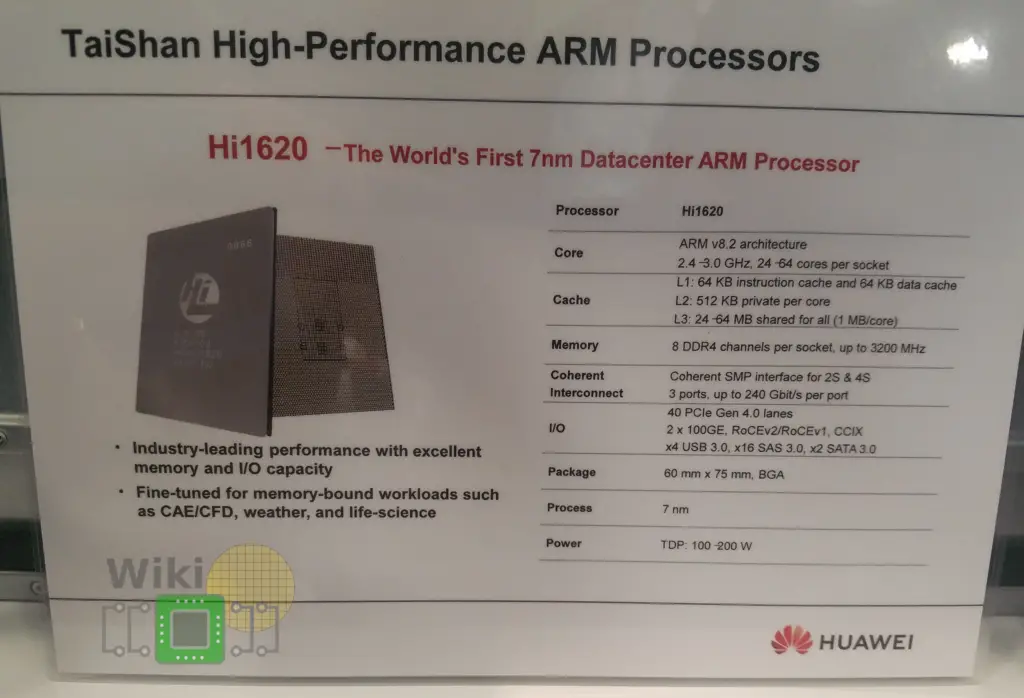

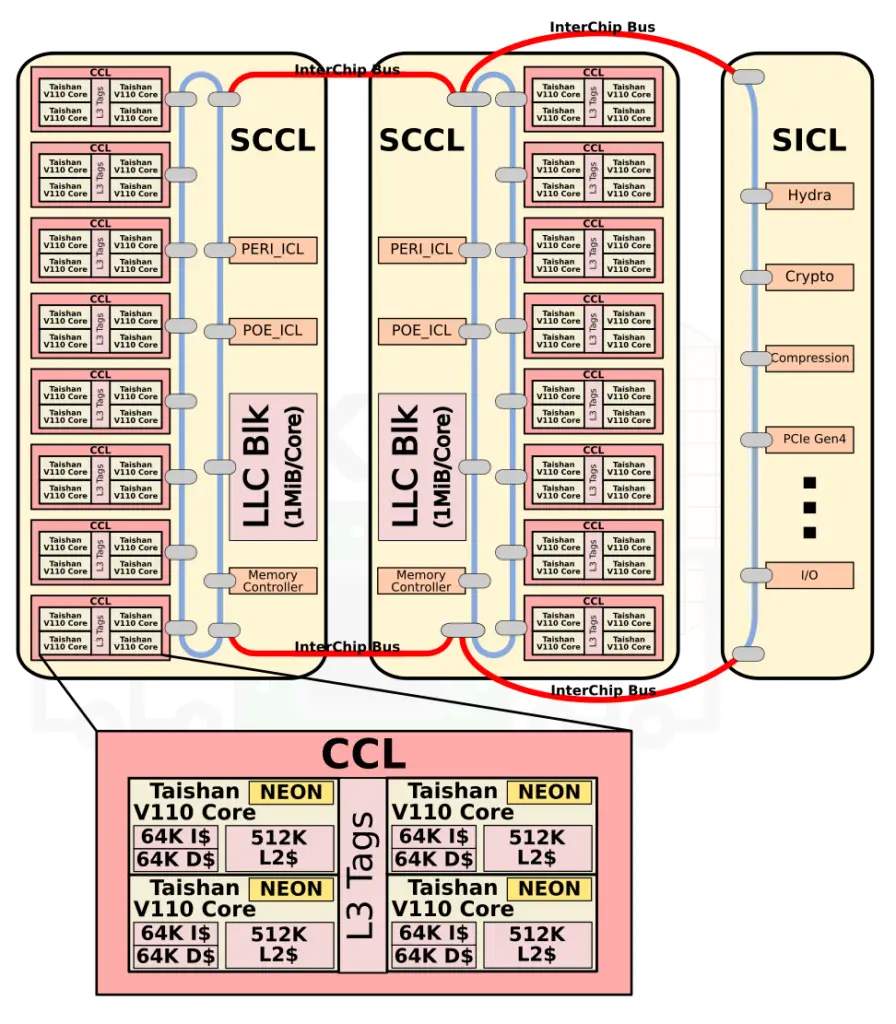

As far as the TaiShan V110 core goes, the details are still vague, but we are working on obtaining more info. Each core is a 4-way out-of-order superscalar design that implements the ARMv8.2-A ISA. Actually, Huawei told us the core supports almost all the ARMv8.4 features with a few exceptions, including dot product and the FP16 FML extension. It features private 64 KiB L1 instruction and data caches as well as 512 KiB of private L2. Though light on details, Huawei says that compared to Arm’s Cortex cores, its core features an improved memory subsystem, a larger number of execution units, and a better branch predictor. Fabricated on TSMC’s 7-nanometer HPC process technology and packing around 20 billion transistors (over multiple dies), the full chip incorporates up to 64 such cores organized as sixteen quadplexes. There is a last-level cache of 1 MiB per core, which is shared by all the cores. As for the integrated memory controller, the SoC supports up to eight channels of DDR4 memory at rates of up to 2933 MT/s.
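As a rough sanity check on the memory figures above, the theoretical peak DRAM bandwidth can be estimated from the channel count and the transfer rate. The sketch below assumes the standard 64-bit (8-byte) DDR4 data bus per channel, which the article does not state explicitly; the function name is purely illustrative.

```python
def peak_dram_bandwidth_gbs(channels: int, mega_transfers_per_s: float,
                            bus_bytes: int = 8) -> float:
    """Theoretical peak DRAM bandwidth in decimal GB/s.

    bus_bytes=8 is an assumption: a standard 64-bit DDR4 channel.
    """
    bytes_per_second = channels * mega_transfers_per_s * 1e6 * bus_bytes
    return bytes_per_second / 1e9


# Eight channels of DDR4 at 2933 MT/s, as quoted for the Kunpeng 920.
kunpeng_920_bw = peak_dram_bandwidth_gbs(channels=8, mega_transfers_per_s=2933)
print(f"{kunpeng_920_bw:.1f} GB/s")  # 187.7 GB/s
```

This is an upper bound per socket; sustained bandwidth in practice will be lower.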

By the way, even though this is a general-purpose server chip, Huawei has also pitched it as an HPC chip on multiple occasions. At least with the current design, this is a bit problematic. The TaiShan V110 cores feature a single 128-bit ASIMD unit. It is capable of executing a single double-precision FMA vector instruction per cycle or two single-precision vector instructions per cycle. This makes it quite an inferior choice for dense SIMD applications, especially those that use double-precision floating point.
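To put the vector throughput in perspective, here is a back-of-the-envelope estimate of peak double-precision throughput. It is only a sketch: it counts an FMA as two floating-point operations (the usual convention) and assumes all 64 cores sustain the 2.6 GHz listed for the top SKU, which is an idealization.

```python
def peak_dp_gflops(cores: int, ghz: float, vector_bits: int = 128,
                   fma_per_cycle: int = 1) -> float:
    """Idealized peak double-precision GFLOPS for a chip.

    Assumes one FMA counts as 2 FLOPs and every core runs at `ghz`.
    """
    lanes = vector_bits // 64                      # 64-bit doubles per vector
    flops_per_cycle = lanes * 2 * fma_per_cycle    # 2 lanes x 2 FLOPs = 4
    return cores * ghz * flops_per_cycle


# One 128-bit ASIMD unit issuing one DP FMA per cycle, 64 cores at 2.6 GHz.
kunpeng_920_peak = peak_dp_gflops(cores=64, ghz=2.6)
print(f"{kunpeng_920_peak:.1f} GFLOPS")  # 665.6 GFLOPS
```

At 4 double-precision FLOPs per cycle per core, the chip has to lean on core count rather than vector width for dense floating-point work.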

The chip incorporates a number of hardware accelerators: a compression engine (GZIP, LZS, LZ4) capable of up to 40 Gbit/s compression and 100 Gbit/s decompression, and a crypto offload engine (for AES, DES, 3DES, SHA1/2, etc.) capable of throughputs of up to 100 Gbit/s. As far as I/O goes, the chip includes 40 lanes of PCIe 4 with CCIX support, 4x USB 3.0, 2x SATA 3.0, x8 SAS 3.0, and an assortment of 10/25/100 GbE ports.

High-Level Microarchitecture Analysis

| | Cavium | HiSilicon | AMD | Intel |
|---|---|---|---|---|
| Micro Arch | Vulcan | TaiShan V110 | Zen | Skylake |
| Pipeline | 13-15 | ? | 15-19 | 14-19 |
| L1I$ | 32 KiB 8-way | 64 KiB | 64 KiB 4-way | 32 KiB 8-way |
| L1D$ | 32 KiB 8-way | 64 KiB | 32 KiB 8-way | 32 KiB 8-way |
| L2$ | 256 KiB 8-way | 512 KiB | 512 KiB 8-way | 1 MiB 16-way |
| Fetch | 32B/cycle | ? | 32B/cycle | 16B/cycle |
| Decode | 4-Way | 4-Way | 4-Way | 5-Way |
| ROB Size | 180 | ? | 192 | 224 |
| Scheduler | Unified (60) | ? | Split Int (6×14)/FP (96) | Unified (97) |
| Issue | 6 | ? | 10 (6+4) | 8 |
| L3$ | 1 MiB/core | 1 MiB/core | 2 MiB/core | 1.375 MiB/core |

Multi-chip Design

Though Huawei has been keeping a tight lip on the chip design itself, the Hi1620 is actually a multi-chip design. We believe there are three dies. The chip comprises two compute dies called Super CPU Clusters (SCCLs), each packing 32 cores. It’s also possible that each SCCL has only 24 cores, in which case there are three such dies, with a theoretical maximum of 72 cores that is possible but not offered for yield reasons. Either way, there are at least two SCCL dies. Additionally, there is an I/O die called the Super IO Cluster (SICL), which contains all the high-speed SerDes and low-speed I/Os.

Each SCCL die contains 6 (or 8) CPU Clusters (CCLs), memory controllers, and the L3 cache block. Each CCL is a TaiShan V110 quadplex along with its partition of the L3 cache tags. The Super IO Cluster includes the various I/O peripherals, including PCIe Gen 4, SAS, the network interface controllers, and the Hydra links.
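The two die configurations discussed above reduce to simple arithmetic. The snippet below is only an illustration of that reasoning; the constant and function names are made up for this example, and neither configuration has been confirmed by Huawei.

```python
CORES_PER_CCL = 4  # each CCL is a quadplex of TaiShan V110 cores


def total_cores(sccl_dies: int, ccls_per_die: int) -> int:
    """Total core count for a given number of SCCL dies and CCLs per die."""
    return sccl_dies * ccls_per_die * CORES_PER_CCL


# Scenario A: two SCCL dies with 8 CCLs (32 cores) each.
print(total_cores(sccl_dies=2, ccls_per_die=8))  # 64

# Scenario B: three SCCL dies with 6 CCLs (24 cores) each.
print(total_cores(sccl_dies=3, ccls_per_die=6))  # 72
```

Scenario A matches the 64-core maximum exactly, while scenario B would leave 8 cores unused at the top SKU.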

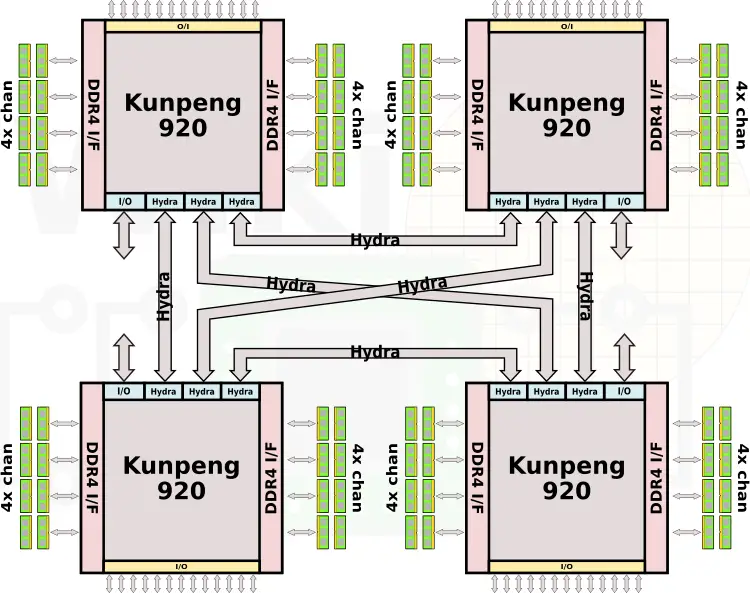

Multiprocessing

Targeting the server market, the Kunpeng 920 chips support both 2-way and 4-way multiprocessing. As noted above, the SICL contains three Hydra interface links, each with a peak bandwidth of 240 Gbps. Currently, the only other Arm server chip on the market with multiprocessing support is the Cavium ThunderX2, based on the Vulcan microarchitecture. It’s worth noting that Ampere plans on adding 2-way SMP support sometime this year.
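The aggregate socket-to-socket figures used in the comparison in this section follow directly from the link count and per-link rate. A minimal sketch, using only the Hydra and CCPI2 numbers quoted in this article (the helper name is illustrative):

```python
def aggregate_gbps(links: int, gbps_per_link: float) -> float:
    """Peak aggregate interconnect bandwidth in Gb/s."""
    return links * gbps_per_link


hydra_gbps = aggregate_gbps(links=3, gbps_per_link=240)   # Kunpeng 920
ccpi2_gbps = aggregate_gbps(links=24, gbps_per_link=25)   # ThunderX2 (24 x 25 Gbps)

print(f"Hydra: {hydra_gbps:.0f} Gb/s = {hydra_gbps / 8:.0f} GB/s")  # 720 Gb/s = 90 GB/s
print(f"CCPI2: {ccpi2_gbps:.0f} Gb/s = {ccpi2_gbps / 8:.0f} GB/s")  # 600 Gb/s = 75 GB/s
```

These are peak raw link rates; how the three Hydra links are divided among sockets in a 4-way system is not covered here.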

2-Way Multiprocessing Comparison

| | Cavium | Ampere | HiSilicon | AMD | Intel |
|---|---|---|---|---|---|
| Micro Arch | Vulcan | Skylark | TaiShan v110 | Zen | Skylake |
| Interconnect | CCPI2, 24 x 25 Gbps, 600 Gb/s | N/A | Hydra, 3 links x 240 Gbps, 720 Gb/s | IF, 304 Gb/s (die), 1214 Gb/s (socket)¹ | UPI, 3 links x 340 Gbps, 1,018 Gb/s |
| Max Cores | 64 | N/A | 128 | 64 | 56² |
| Max Threads | 256 | N/A | 128 | 128 | 112 |
| Max Channels | 16 × DDR4-2666 | N/A | 16 × DDR4-2933 | 16 × DDR4-2666 | 12 × DDR4-2666 |
| Max Memory | 4 TiB | N/A | 2 TiB | 4 TiB | 4 TiB |
| PCIe Lanes | 112 | N/A | 64 | 128 | 96 |

- 1 = AMD’s Infinity Fabric v1 operates at MEMCLK. Numbers reflect that of the highest-supported memory (DDR4-2666, MEMCLK = 1333.33 MHz)

- 2 = Intel offers ultra-high density 112-core servers based on Cascade Lake AP but we do not consider them in the same class

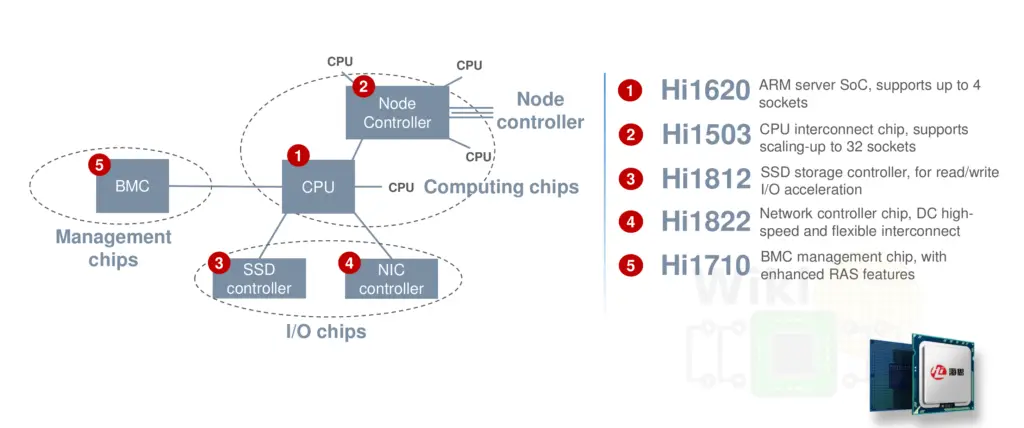

Homegrown Platform

As part of the Kunpeng 920 platform, HiSilicon develops the entire platform chipset as well. That includes the Hi1620 CPUs, Hi1503 node controller, Hi1812 SSD controller, Hi1822 NIC, and the Hi1710 BMC.

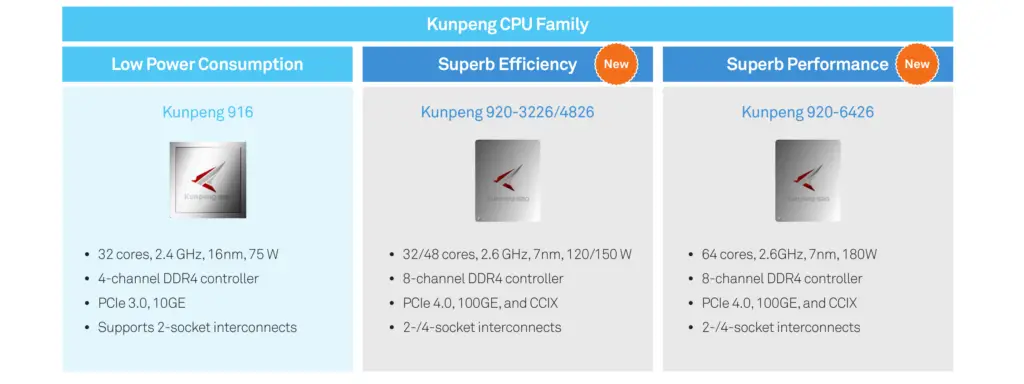

Current Lineup

Huawei stated that the Hi1620 is expected to be binned to cover the full server spectrum, from 64 cores down to 16 cores, with TDPs ranging from 100 W to 200 W. It’s important to note that there are no lower-power SKUs. For that segment, Huawei will continue to offer the Kunpeng 916, which is based on the Cortex-A72 and goes up to 80 W. At least for now, there are only three publicly disclosed SKUs.

Kunpeng 920-Series Lineup

| Model | Cores | L3 | Frequency | TDP |
|---|---|---|---|---|
| Kunpeng 920-6426 | 64 | 64 MiB | 2.6 GHz | 180 W |
| Kunpeng 920-4826 | 48 | 48 MiB | 2.6 GHz | 150 W |
| Kunpeng 920-3226 | 32 | 32 MiB | 2.6 GHz | 120 W |
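For a quick comparison of the three disclosed SKUs, the lineup above can be reduced to per-core figures. This is only a sketch over the published numbers; TDP per core is a crude metric, since the uncore, memory controllers, and I/O also draw from the same power budget.

```python
# (model, cores, L3 in MiB, TDP in watts), taken from the lineup table.
lineup = [
    ("Kunpeng 920-6426", 64, 64, 180),
    ("Kunpeng 920-4826", 48, 48, 150),
    ("Kunpeng 920-3226", 32, 32, 120),
]

# Derive L3 per core and TDP per core for each SKU.
per_core = {
    model: {"l3_mib": l3 / cores, "watts": tdp / cores}
    for model, cores, l3, tdp in lineup
}

for model, stats in per_core.items():
    print(f"{model}: {stats['l3_mib']:.0f} MiB L3/core, {stats['watts']:.2f} W/core")
```

Every SKU keeps the 1 MiB of L3 per core, while the TDP per core rises as the core count drops (about 2.81 W, 3.13 W, and 3.75 W respectively), consistent with a fixed power overhead outside the cores.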

Next-Gen Kunpeng 930

Looking out to the 2021 timeframe, Huawei’s next-generation chip is the Hi1630, which will be branded as the Kunpeng 930. Though it is too early for final specs, this chip is expected to feature a higher-performance core with higher frequencies, simultaneous multithreading (SMT) support, and Arm’s Scalable Vector Extension (SVE). Additionally, it is expected to move to DDR5 memory.

Derivative WikiChip Pages: TaiShan Core, Kunpeng 920.
