Bitcoin giant Bitmain enters the AI market with the BM1680 neural processor

Bitmain Technologies, a company well known for its ASIC bitcoin mining chips, has announced that it has started sampling its first machine-learning accelerator. Today, at the AIWORLD 2017 Artificial Intelligence Conference in Beijing, Bitmain’s founder and CEO Micree Zhan was invited to give a keynote speech on AI computing. Along with the speech, Bitmain officially launched its new neural processor, the Sophon BM1680. The company also introduced accelerator cards based on the chip and a complete analytics server. Everything will be sold under the new Sophon brand name.

The Chip

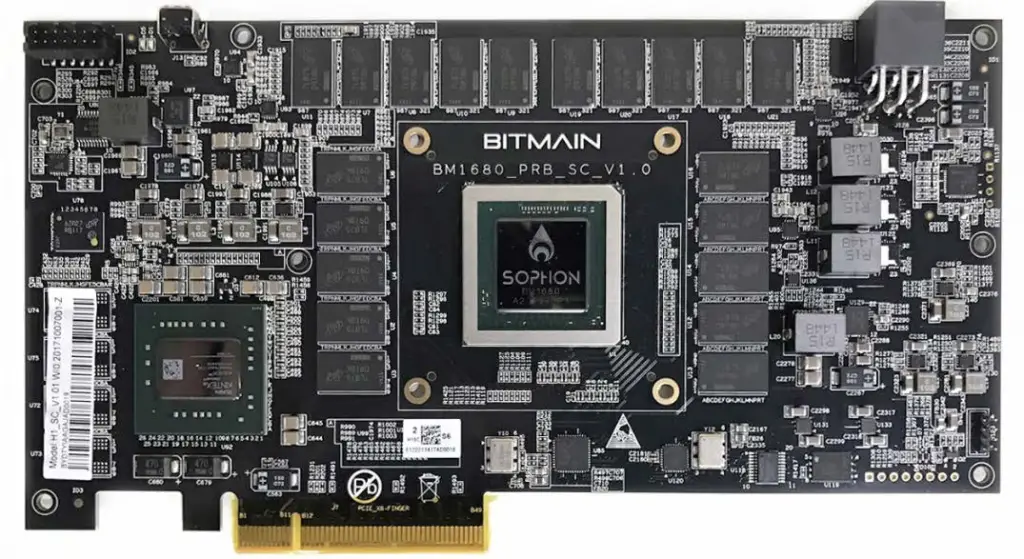

Bitmain started exploring the field of artificial intelligence and neural processors as early as 2015. By April of this year the BM1680 had taped out. The Sophon BM1680 is a custom-designed neural processor with a 41 W TDP, manufactured on TSMC’s 28nm HPC+ process. Bitmain claims the chip is designed not only for inference but also for training neural networks, and that it is suitable for common ANN types such as CNNs, RNNs, and DNNs. In that regard, it is similar in application to Intel’s Nervana NNP, which was also recently announced.

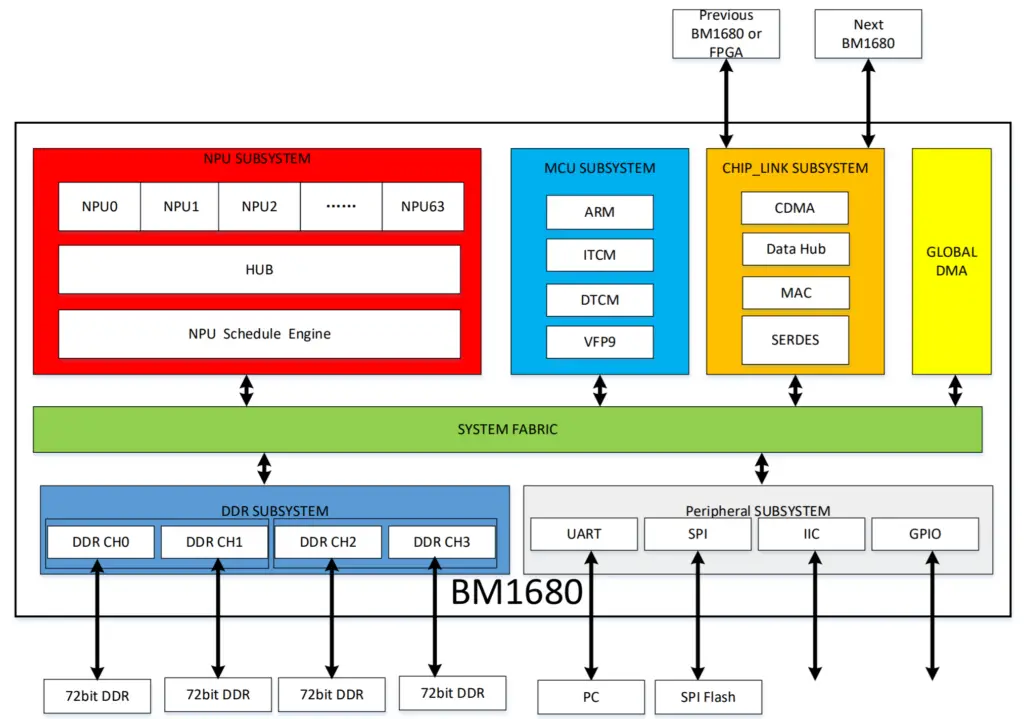

At the heart of the chip is the NPU subsystem, which consists of 64 NPUs, the hub, and an NPU Schedule Engine. The scheduling engine is in charge of controlling the data flow to the individual NPUs. Bitmain has not disclosed the intimate details of the NPU cores, but we do know that each NPU has 512 KiB of program-visible SRAM and supports 64 single-precision operations. With 64 NPUs, the chip has a total of 32 MiB of this on-chip memory and a peak performance of 2 TFLOPS (single-precision). The company stated that the chip is capable of 80 billion algorithmic operations per second, but since we don’t know the exact meaning of those operations, it’s impossible to compare that number to existing chips.
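The aggregate figures above follow from simple arithmetic on the per-NPU numbers. The short Python sketch below reproduces the 32 MiB total and shows what an even split of the quoted 2 TFLOPS peak across the 64 NPUs would look like. The function names are illustrative only, and the even split is an assumption, since Bitmain has not published per-NPU throughput.

```python
KIB = 1024
MIB = 1024 * KIB

NPU_COUNT = 64          # from the article
SRAM_PER_NPU_KIB = 512  # from the article
PEAK_TFLOPS = 2.0       # quoted single-precision peak for the whole chip


def total_sram_mib(npu_count, sram_per_npu_kib):
    """Total program-visible SRAM across all NPUs, in MiB."""
    return npu_count * sram_per_npu_kib * KIB / MIB


def per_npu_gflops(peak_tflops, npu_count):
    """Peak GFLOPS per NPU, ASSUMING the chip peak divides evenly."""
    return peak_tflops * 1000 / npu_count


print(total_sram_mib(NPU_COUNT, SRAM_PER_NPU_KIB))  # 32.0
print(per_npu_gflops(PEAK_TFLOPS, NPU_COUNT))       # 31.25
```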

In addition to the embedded ARM microcontroller and the typical peripheral subsystem, the chip has a beefed-up memory subsystem that supports up to 16 GiB of quad-channel DDR4-2667 with ECC, for a total bandwidth of 85.34 GB/s. This memory is used to store network information and neuron data.
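The 85.34 GB/s figure can be reproduced with the usual peak-bandwidth formula for DDR memory: transfer rate times bus width times channel count. The sketch below assumes a standard 64-bit (8-byte) data bus per channel and a rate of 2667 MT/s. Neither detail is stated by Bitmain, but together they match the quoted number.

```python
def ddr_bandwidth_gb_s(transfers_mt_s, channels, bus_bytes=8):
    """Theoretical peak DDR bandwidth in GB/s (decimal gigabytes).

    bus_bytes=8 ASSUMES a standard 64-bit data bus per channel,
    excluding the extra ECC bits.
    """
    return transfers_mt_s * 1e6 * bus_bytes * channels / 1e9


# Quad-channel DDR4-2667, as described in the article
print(round(ddr_bandwidth_gb_s(2667, channels=4), 2))  # 85.34
```

This is a theoretical peak; sustained bandwidth in practice will be lower.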

Multiprocessing

The BM1680 supports multiprocessing: multiple chips can be connected together to act as a single unit. The chip integrates what Bitmain calls the “BMDNN Chip link”, a low-latency, high-speed SerDes link with a total bandwidth of 50 GB/s.

Accelerator Cards

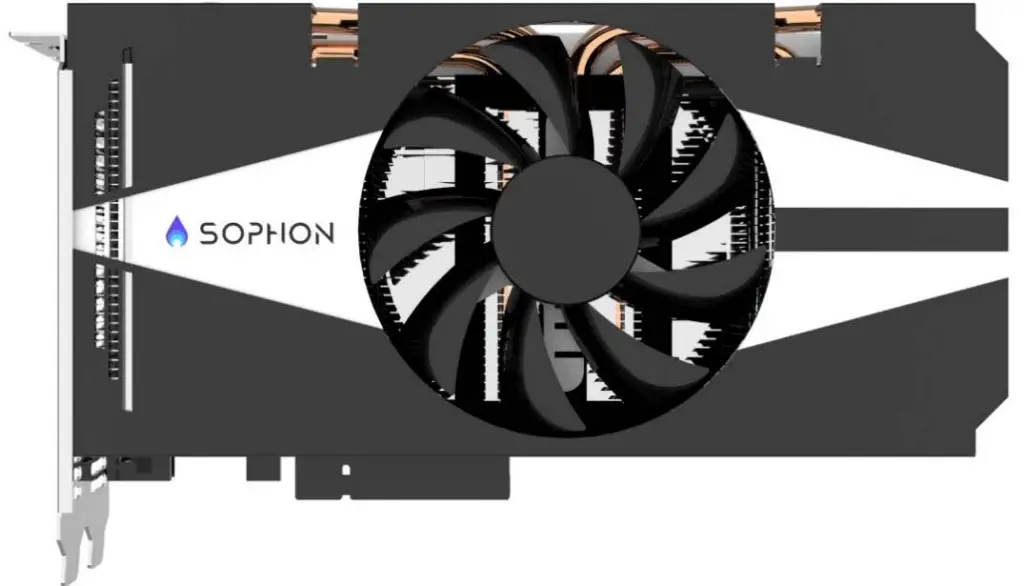

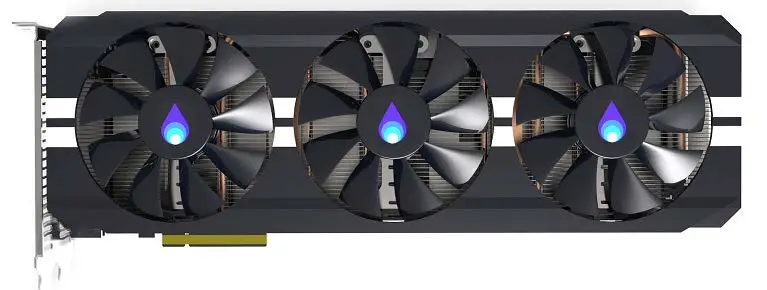

As with its Bitcoin business, Bitmain plans on introducing complete products based on the BM1680. Two initial SKUs were introduced, the Sophon SC1 and the SC1+. Both are PCIe 3.0 x8 deep learning acceleration cards with cooling fans. The SC1 includes a single BM1680 chip while the SC1+ includes two. Both cards come with a Xilinx Kintex FPGA used to receive the data from the host processor (typically an Intel Xeon) and distribute it across the available nodes.

Servers

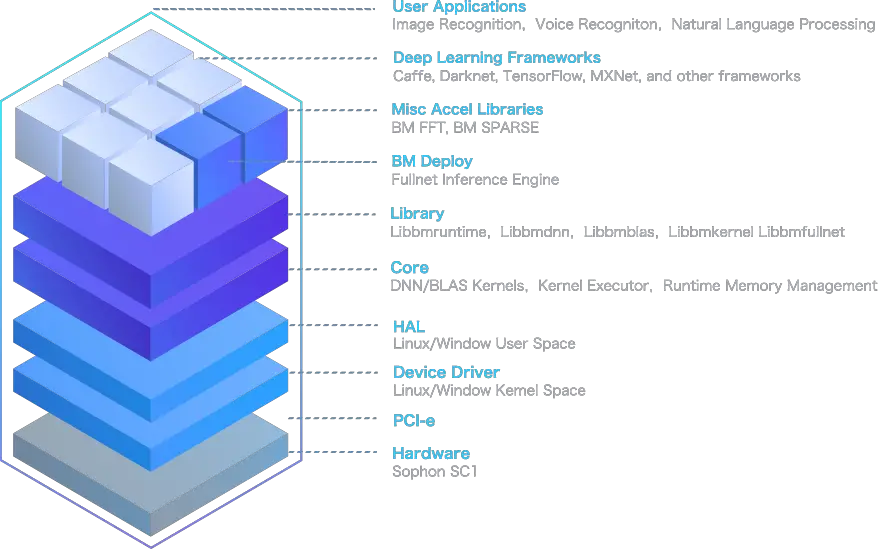

The final product Bitmain launched is what the company calls an intelligent video and image analysis server system, the Sophon SS1. The SS1 comes preinstalled with Ubuntu and includes Bitmain’s entire AI software stack, including runtime libraries and sample code. Bitmain says users can expect capabilities such as human face detection and video analysis out of the box. The Sophon SS1 will feature an Intel Xeon E3-1275 v6 operating at 3.8 GHz with a turbo boost of 4.2 GHz, and two Sophon SC1+ cards for a total of four BM1680s.

| Item | Specification |
| --- | --- |
| OS | Ubuntu 16.04 |
| Toolkit | Preloaded software environment, including firmware, drivers, BMDNN libs, runtime libs and other related packages |
| Test sample codes | Preloaded models and test-case code for object detection and recognition |
| CPU | Intel E3-1275 v6, 4 cores, 3.8 GHz (max turbo 4.2 GHz) |
| DL accelerating card | 2x SC1+ |
| System power | 800 W |
| System memory | 2x 8 GB DDR4-2133 ECC (max 64 GB) |
| Storage | 250 GB 2.5-inch SATA3 solid state disk (max 6 SATA disks) |
| Network interface | 2x GLAN (1000M/100M self-adaptation) |
| Peripheral | 4x USB 3.0 |
| Display | 1x HDMI |
| Dimensions | 380 (L) x 425 (W) x 177 (H) mm |
| Weight | 10 kg |
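To put the SS1 configuration in perspective, the sketch below tallies the accelerator resources from the per-chip figures quoted earlier (2 TFLOPS peak and 41 W TDP per BM1680). The aggregate numbers are our own back-of-the-envelope arithmetic, not figures published by Bitmain, and they assume perfect scaling across chips, so treat the throughput as an upper bound.

```python
# Per-card chip counts and per-chip figures as quoted in the article
CHIPS_PER_CARD = {"SC1": 1, "SC1+": 2}
CHIP_PEAK_TFLOPS = 2.0  # single-precision peak per BM1680
CHIP_TDP_W = 41         # TDP per BM1680


def system_totals(card, card_count):
    """Naive aggregate of accelerator chips, peak TFLOPS and chip TDP.

    ASSUMES linear scaling; real multi-chip throughput will be lower.
    """
    chips = CHIPS_PER_CARD[card] * card_count
    return {
        "chips": chips,
        "peak_tflops": chips * CHIP_PEAK_TFLOPS,
        "chip_tdp_w": chips * CHIP_TDP_W,
    }


# The SS1 ships with two SC1+ cards
ss1 = system_totals("SC1+", 2)
print(ss1)  # {'chips': 4, 'peak_tflops': 8.0, 'chip_tdp_w': 164}
```

The combined chip TDP covers only the BM1680s themselves; the 800 W system power figure also has to account for the CPU, memory, storage, and the rest of the cards.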

Future Roadmap

Bitmain is expected to maintain its rapid development cycle. The company expects to introduce a second-generation chip based on TSMC’s 12nm FinFET process, an enhanced version of its 16nm FinFET process, and hopes the chip will start shipping in the second half of 2018.
