Intel Unveils the Tremont Microarchitecture: Going After ST Performance

Back End

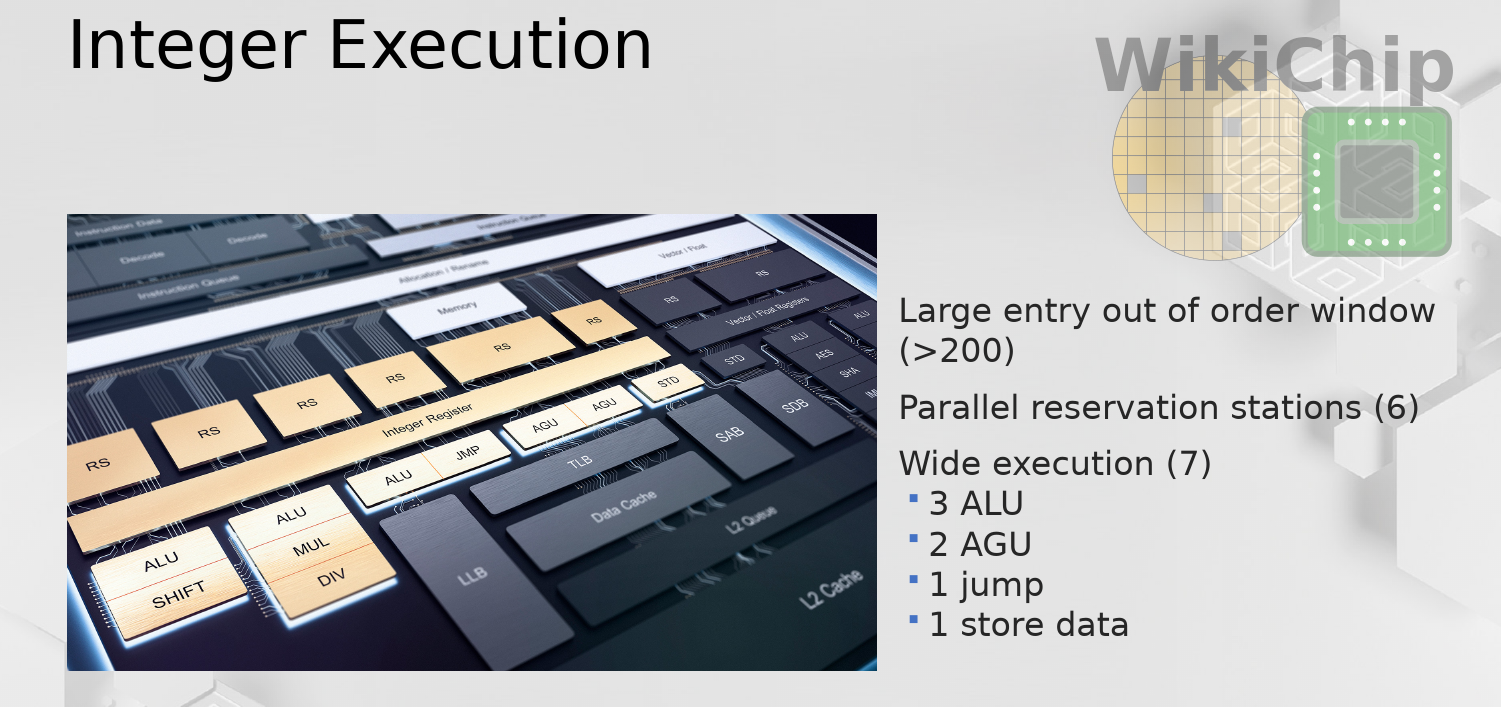

The decoded streams from each cluster sit in their own decoded instruction queues. Allocation can then pull those instructions in-order from the queues and allocate and rename them serially at the rate of four instructions per cycle. Additionally, retirement is also 4-wide. This is unchanged from Goldmont Plus. Tremont has a relatively big 208-entry deep reorder buffer. From here operations are sent to the distributed schedulers for execution.

Execution Engines

On the execution side, things have been widened as well – up to 10 operations may be dispatched each cycle from the various schedulers.

On the integer side, there are six parallel reservation stations – a unified RS for the AGUs and independent reservation stations for all the other integer operations. In total there are three for the ALUs, one for branches, and one for the pick-two AGUs.

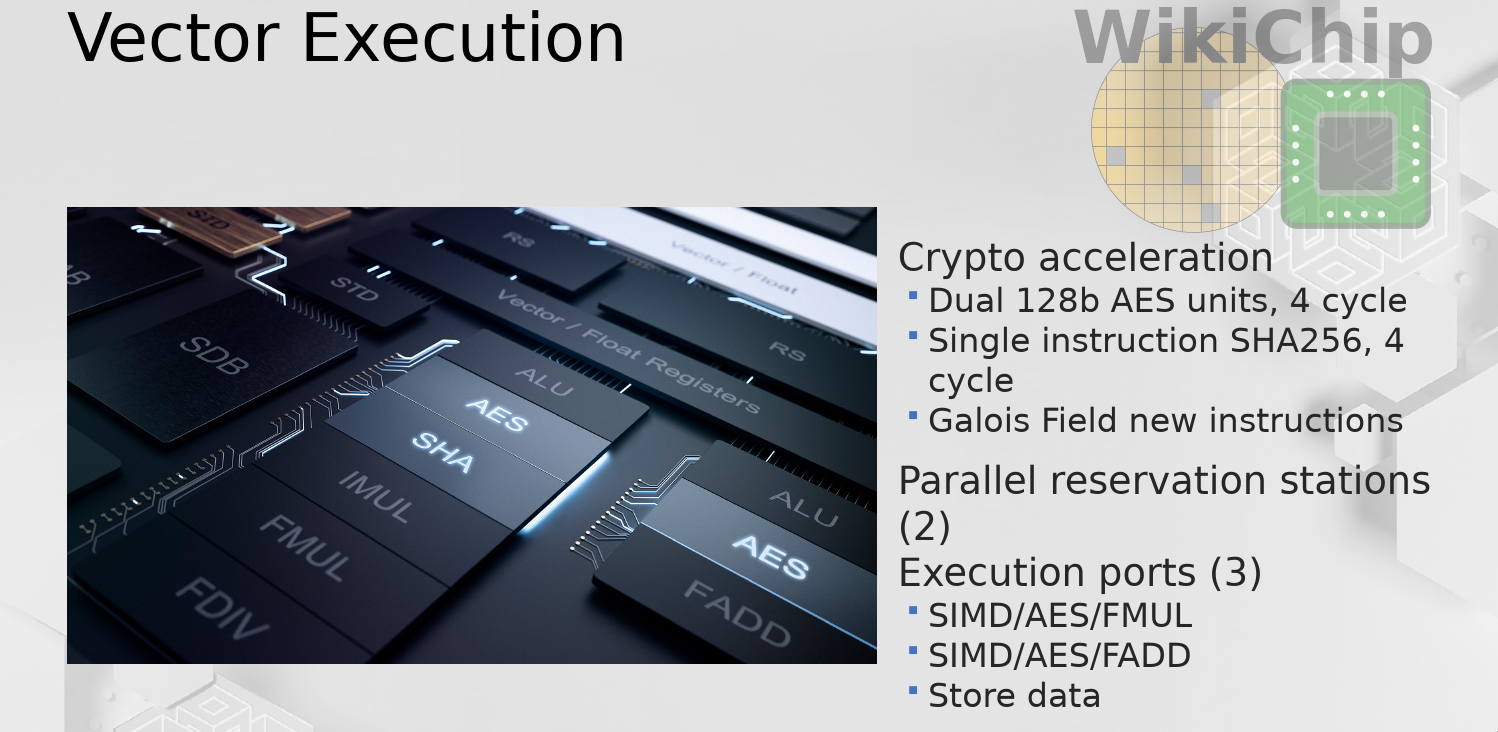

On the vector side, there are two execution pipes – each one is 16 bytes wide. There is a single floating-point multiply and a single floating-point adder. There are dual AES units, both of which are also 16B wide. Additionally, there is a single-instruction SHA-256 EU with a 4-cycle latency. By the way, it’s worth noting that along with the AVX-512 Galois Field instructions that were added in Sunny Cove, Tremont adds similar instructions but as part of the SSE extensions. The FPU side has a separate dedicated store data port which is also 16B wide sitting on its own reservation station.

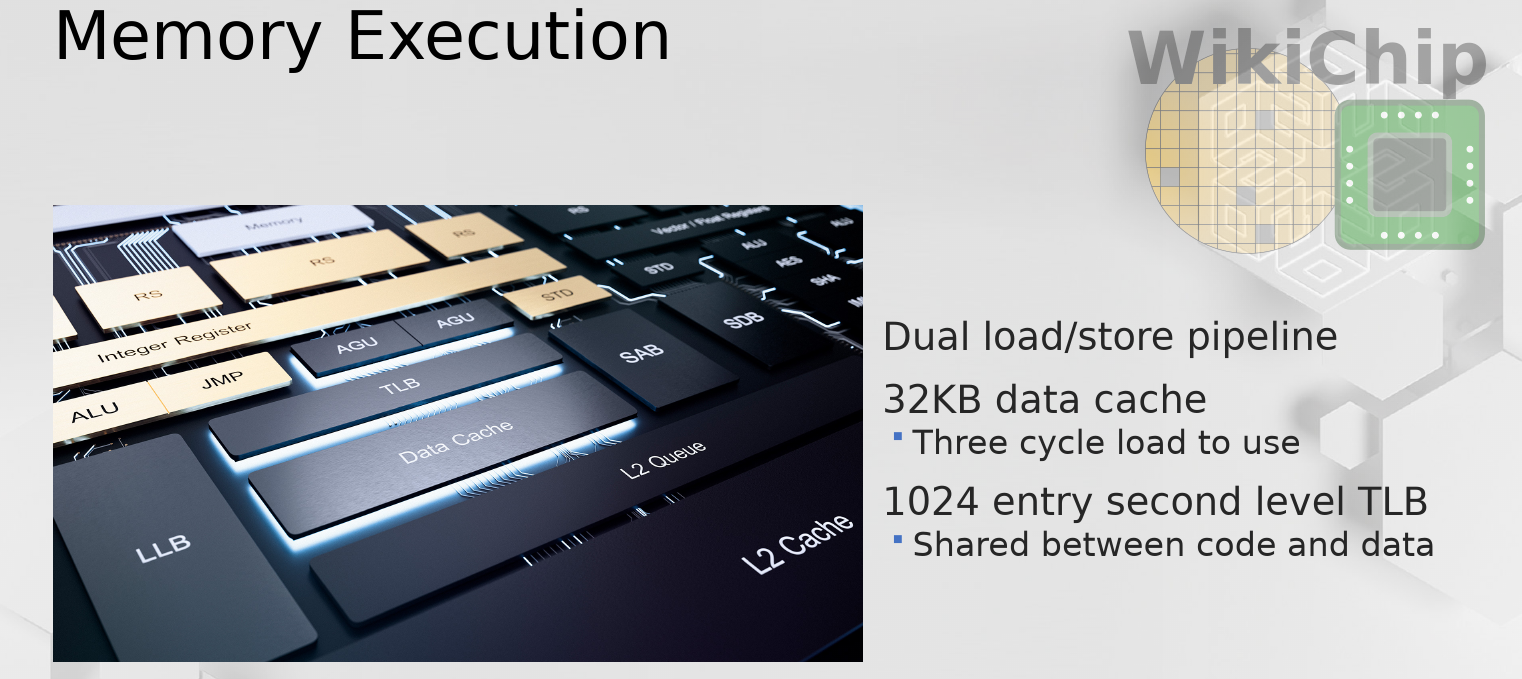

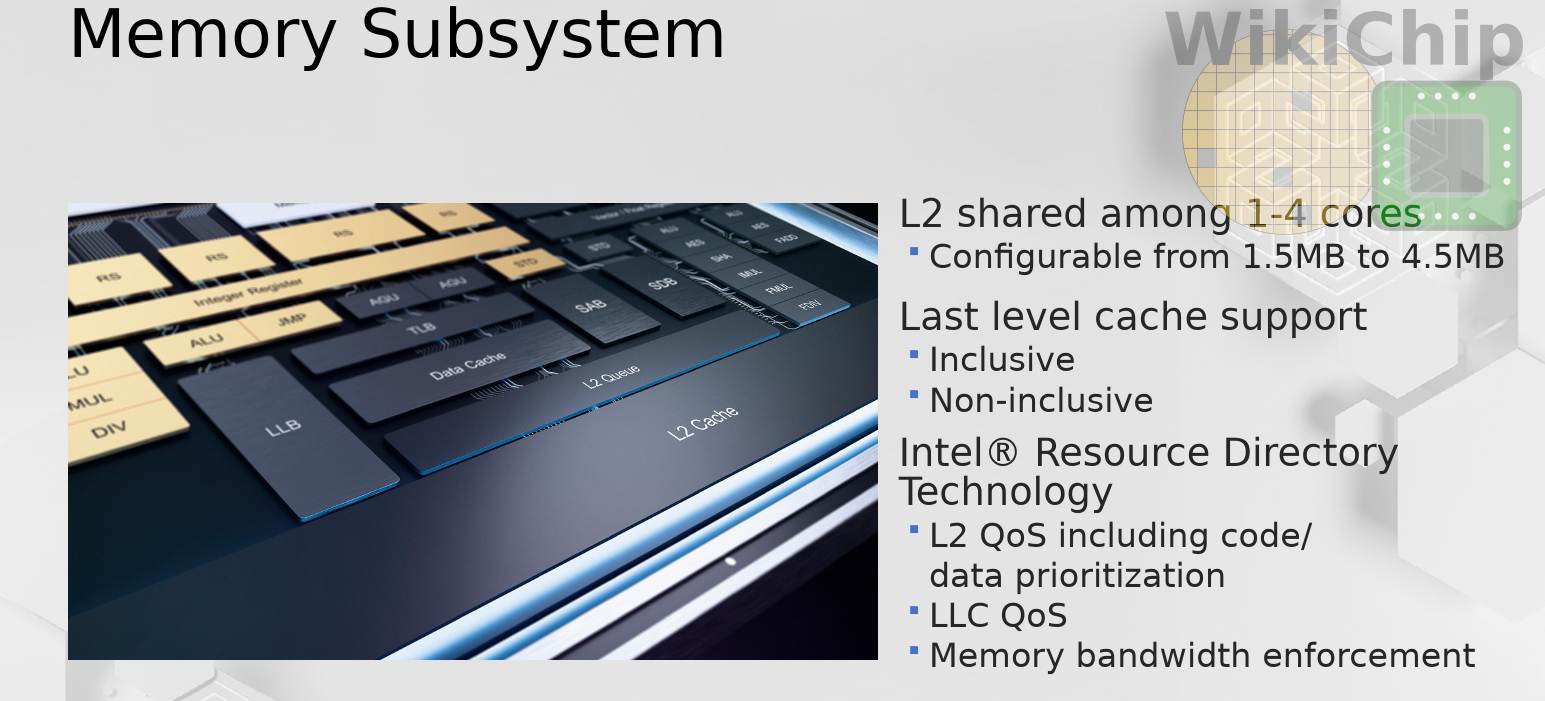

Memory Subsystem

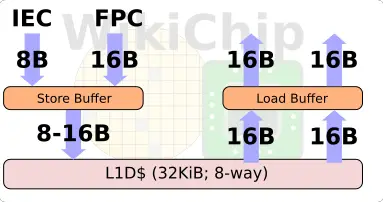

On the memory side, Tremont features two load/store pairs. There are two load/store pipelines. Each cycle, an address can be generated for two loads, two stores, or one of each. Tremont features a 32 KiB data cache which is 8-way. There is a 1K-entry L2 TLB that is shared between the instruction and data cache. There is a 3-cycle load-to-use latency for almost all loads (i.e., the majority of the x86 addressing modes with the exception of segmentation which incur one additional cycle of latency). On the integer side, one 8B store can be done each cycle while on the FPU side, one 16B store can be done each cycle. In other words, you can do 8B+16B/cycle. Note that like in Sunny Cove, a dual-load/store pipes means doing things such as cache lookup, address generation, TLB lookup simultaneously. But since there is only one L1D cache write port, ultimately just a single senior write is written per cycle to a cache line.

The L2 is shared among all the cores. Since Tremont can actually be configured with groups of one to four cores, Intel says that the typical product configuration will consist of a quad-core cluster, but this will really depend on the product. The capacity of the L2 itself is also configurable and can range between 1.5 MiB all the way to 4.5 MiB (organized as 12-way to 18-way).

Depending on the product, some products may have an additional last level cache. Tremont, as an IP, has been designed to be integrated into any of Intel’s products and fabrics (Intel actually says all). To that end, there is support for both non-inclusive and inclusive last level cache configurations with all the related hardware support (snoop filters, etc.). The reason for this is so that Tremont, as an IP, can be compatible with all of Intel’s fabrics that are used in various products. For example, the LLC behavior on the client SoC is different from that of the server. It’s worth noting that there is support for Intel’s Resource Director Technology (RDT) which is already found in the server chips and allows for various monitoring oversight such as bandwidth allocation, QoS, and prioritization.

–

Spotted an error? Help us fix it! Simply select the problematic text and press Ctrl+Enter to notify us.

–